AI INFRASTRUCTURE

AI infrastructure aiming for world-leading speed, from FPGA to ASIC.

We improve AI application speed and efficiency through hardware acceleration. With FPGA/ASIC expertise, we support deployments from edge to cloud.

- Staged strategy: FPGA → IP core → ASIC

- Key figures: 1 ms recognition latency / under 5 W power consumption

- Dedicated accelerators for CNN and LLM inference

What We Do

We provide CNN and LLM inference accelerators. We design and implement across the full path from FPGA to ASIC, supporting deployment from edge AI to data centers.

For CNN inference, we report up to 21x the performance of Jetson baselines.

Technology

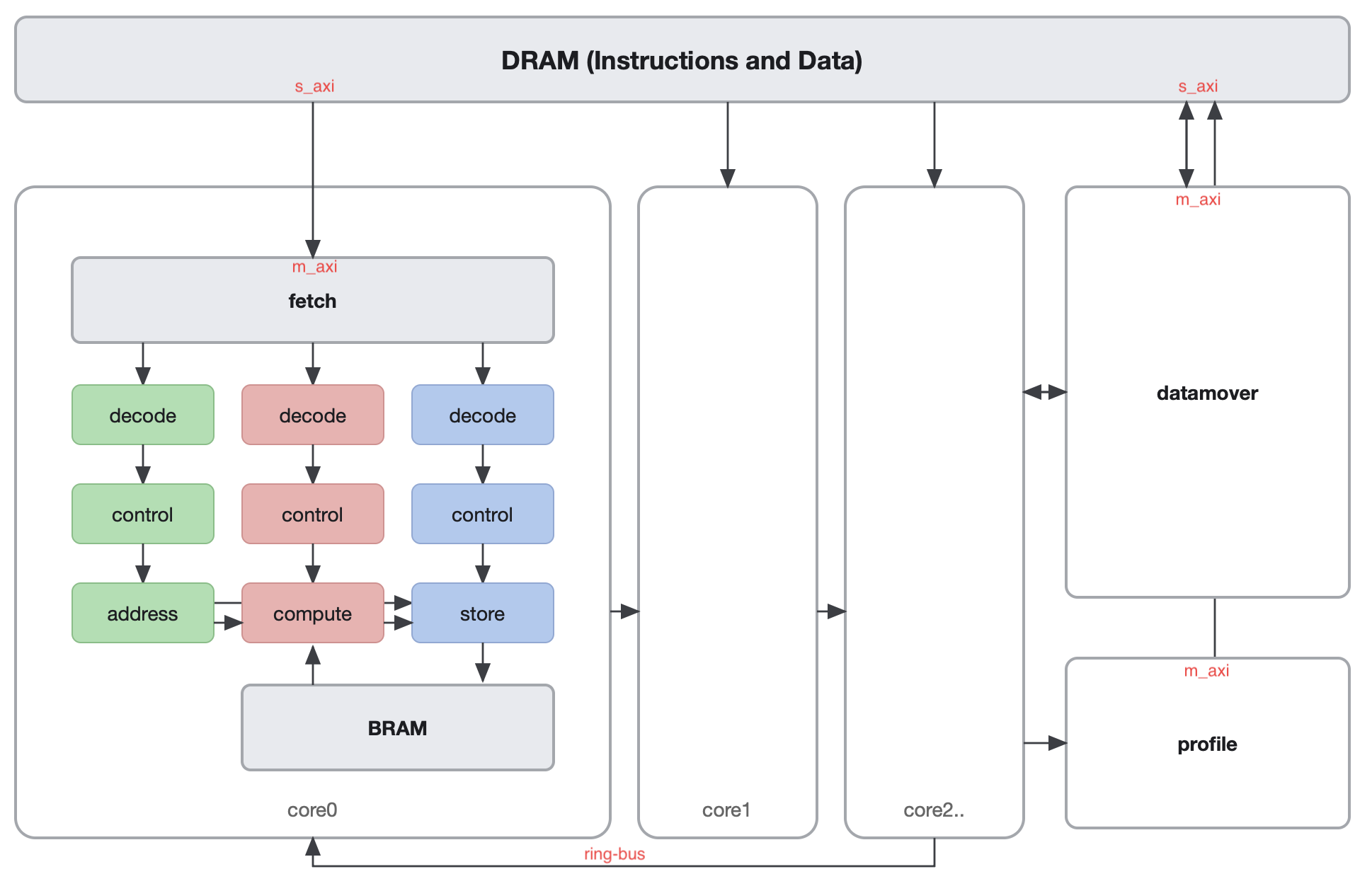

Our approach combines dataflow architecture with dedicated hardware design. By reducing CPU/GPU overhead and optimizing bandwidth and memory behavior by workload, we target both performance and power efficiency.

Wasabi2.0 integrates model compression, a multicore architecture, and 8-bit quantization.
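
As a general illustration of 8-bit quantization, the sketch below maps floating-point weights to signed 8-bit integers and back. It is not the Wasabi2.0 implementation: the symmetric per-tensor scheme, the function names `quantize_int8` and `dequantize_int8`, and the sample weights are all assumptions chosen for the example.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of floats to signed 8-bit integers.

    Hypothetical helper for illustration; not taken from any product.
    """
    max_abs = max((abs(v) for v in values), default=0.0)
    # Map the largest magnitude to 127; use 1.0 for an all-zero tensor
    # so the division below is always defined.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the range [-127, 127].
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


# Sample weights (made up for the example).
weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
```

Each restored value differs from the original by at most half a quantization step (`scale / 2`), which is the accuracy cost traded for 4x smaller storage than 32-bit floats and for integer arithmetic in hardware.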

Use Cases / Applications

Primary targets are edge AI workloads that require low latency and low power, especially computer vision tasks. Our roadmap covers deployment from the data center to the edge.

Message from the Founder

Akira Jinguji is a researcher on the Processor Research Team at the RIKEN Center for Computational Science and Representative Director of SpiceEngine Inc. His research focuses on reconfigurable computing architectures for machine learning accelerators and on extreme ultraviolet (EUV) lithography simulation.

Recent work includes numerical methods for accelerating optical analysis on supercomputers and large-scale GPU clusters, and co-optimization of inference and training using FPGA-centric reconfigurable processors. By leveraging sparse and low-memory-access data paths, he explores architectures that efficiently handle 3D EUV mask modeling and approximate neural network computation.

To translate research outcomes into industrial value, he founded SpiceEngine and continues to drive accelerator infrastructure from both the software-stack and hardware-implementation perspectives.

- Apr 2024 - Present: Researcher, Processor Research Team, RIKEN Center for Computational Science

- May 2022 - Present: Representative Director, SpiceEngine Inc.

- Apr 2022 - Mar 2024: Assistant Professor, Takahashi Lab, School of Engineering, Tokyo Institute of Technology

- Apr 2020 - Mar 2022: Doctoral Program, Nakahara Lab, School of Engineering, Tokyo Institute of Technology

Company Status

Contact

For technical consultations and collaboration, please contact us via the form.